Topics at a Glance

The core areas of my work: Critical Thinking, Deciding, Communicating, Using AI and Evaluating Information, each with its underlying mechanics, practical relevance and scientific foundation.

This page brings together the core areas of my work and organises them by mechanics, practical application and scientific grounding. Deeper dives are available in expandable sections, so you can get a quick overview and go into detail where it's useful.

Choose your entry point, scroll to the topic areas or jump straight to the scientific foundation.

Topic Areas

Critical Thinking

How judgements form, when they go wrong and how clarity can be stabilised under pressure.

Communicating

How language, expectations and handovers can be structured so that collaboration becomes more reliable.

Deciding

How criteria, decision rights and protocols increase pace without sacrificing quality.

Using AI

How AI scales pace without giving up judgement, with clear checks and boundaries.

Evaluating Information

How sources, evidence and uncertainty are examined to reduce faulty conclusions and risk.

Critical Thinking

Our thinking determines how problems get framed, information gets weighted and decisions get made. In complex situations, quality rarely breaks down because of missing knowledge. It breaks down because of unnoticed assumptions and cognitive shortcuts.

Typical Situations

Arguments seem plausible but are logically shaky (e.g. false causality, false certainty, overgeneralisation)

Uncertainty gets ignored or converted into false precision instead of being named and contextualised clearly

Under time pressure, thinking gets oversimplified or decisions drift through excessive delay

Strong evidence gets over- or underweighted because intuition, status or availability (whatever happens to be salient) skews the assessment

What Changes

Fewer cognitive distortions, because assumptions, alternatives and counterarguments are systematically examined

More robust judgements under pressure, because brief reflection checks replace spontaneous shortcuts

Better learning curves, because recurring errors become visible and decisions can be reviewed retrospectively

Clearer priorities, because uncertainty is made explicit and arguments are weighted by relevance and evidence rather than by who speaks loudest

Tools & Standards

Dual-Process Checks (intuitive vs. analytical)

Metacognitive questions (thinking about your own thinking)

Bias and Noise Checks (systematic distortion and random variance)

Problem Framing (pinpointing the real question: "What is this actually about?")

Pre-Mortem (anticipating failure: "How could this go wrong?")

Confidence Calibration ("How certain are we really?")
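Confidence Calibration can be made concrete by logging a stated probability for each forecast and scoring it once the outcome is known. The sketch below uses the Brier score (the mean squared gap between stated confidence and what actually happened; lower is better). The function name and the sample log are illustrative assumptions, not a prescribed format.

```python
def brier_score(forecasts):
    """Mean squared gap between stated confidence (0..1) and the outcome (True/False)."""
    if not forecasts:
        raise ValueError("need at least one forecast")
    return sum((p - float(outcome)) ** 2 for p, outcome in forecasts) / len(forecasts)


# Hypothetical log: (stated confidence that the call was right, whether it was)
team_log = [(0.9, True), (0.8, False), (0.6, True), (0.7, True)]
score = brier_score(team_log)
```

Answering 50% every time always earns a score of 0.25, so a team log that stays clearly below that value suggests the stated confidence carries real information.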

For you:


According to current labour market analyses (e.g. World Economic Forum, 2025), critical, analytical and creative thinking rank among the most important core competencies worldwide through to 2030. In practice, this means problems get framed more precisely, assumptions get tested, distortions get reduced and ambiguity gets translated into concrete options.

A central mechanism is the interplay between intuitive thinking (System 1) and analytical thinking (System 2). When both modes are used consciously, teams reduce errors in judgement, weigh risk more consistently and make decisions that remain traceable in hindsight. Under pressure, micro-routines prevent snap decisions and decision drift, which reduces rework, conflict and escalation.

Metacognition (thinking about one’s own thinking) makes judgement more robust. Knowing what is known and where uncertainty remains allows teams to review outcomes systematically, name influencing factors and avoid repeating the same mistakes. Progress becomes measurable, not just felt.

For businesses, critical thinking becomes a performance factor. Decisions get clearer, faster and more consistent, particularly in dynamic, AI-shaped environments. Targeted training and shared standards reduce cognitive error patterns and improve the quality of complex decisions over time.


Albrecht, L. B. (2025). Kritisches Denken in der Chemielehrer*innenbildung: Eine konzeptionell-empirische Studie zur Integration und Operationalisierung. Logos Verlag Berlin. https://doi.org/10.30819/5970

Berthet, V. (2022). The Impact of Cognitive Biases on Professionals’ Decision-Making: A Review of Four Occupational Areas. Frontiers in Psychology, 12, 802439. https://doi.org/10.3389/fpsyg.2021.802439

Carucci, R. (2024, February 6). In The Age Of AI, Critical Thinking Is More Needed Than Ever. Forbes. https://www.forbes.com/sites/roncarucci/2024/02/06/in-the-age-of-ai-critical-thinking-is-more-needed-than-ever/

Larrick, R. P., & Feiler, D. C. (2015). Expertise in Decision Making. In G. Keren & G. Wu (Eds.), The Wiley Blackwell Handbook of Judgment and Decision Making (1st ed., pp. 696–721). Wiley. https://doi.org/10.1002/9781118468333.ch24

Knight, A. T., Cowling, R. M., Rouget, M., Balmford, A., Lombard, A. T., & Campbell, B. M. (2008). Knowing but not doing: Selecting priority conservation areas and the research-implementation gap. Conservation Biology, 22(3), 610–617. https://doi.org/10.1111/j.1523-1739.2008.00914.x

Marr, B. (2023, February 14). The Top 10 In-Demand Skills For 2030. Forbes. https://www.forbes.com/sites/bernardmarr/2022/08/22/the-top-10-most-in-demand-skills-for-the-next-10-years/

Midtgård, K., & Selart, M. (2025). Cognitive Biases in Strategic Decision-Making. Administrative Sciences, 15(6), 227. https://doi.org/10.3390/admsci15060227

Morewedge, C. K., Yoon, H., Scopelliti, I., Symborski, C. W., Korris, J. H., & Kassam, K. S. (2015). Debiasing Decisions: Improved Decision Making With a Single Training Intervention. Policy Insights from the Behavioral and Brain Sciences, 2(1), 129–140. https://doi.org/10.1177/2372732215600886

Theodorakopoulos, L., Theodoropoulou, A., & Halkiopoulos, C. (2025). Cognitive Bias Mitigation in Executive Decision-Making: A Data-Driven Approach Integrating Big Data Analytics, AI, and Explainable Systems. Electronics, 14(19), 3930. https://doi.org/10.3390/electronics14193930

World Economic Forum. (2025). Future of Jobs Report 2025—Insight Report. World Economic Forum. https://reports.weforum.org/docs/WEF_Future_of_Jobs_Report_2025.pdf

Young, R. (2023, August 28). The Power Of Critical Thinking: Enhancing Decision-Making And Problem-Solving. Forbes. https://www.forbes.com/councils/forbescoachescouncil/2023/07/28/enhancing-decision-making-and-problem-solving/

Scientific foundation: Evidence & Sources ↓

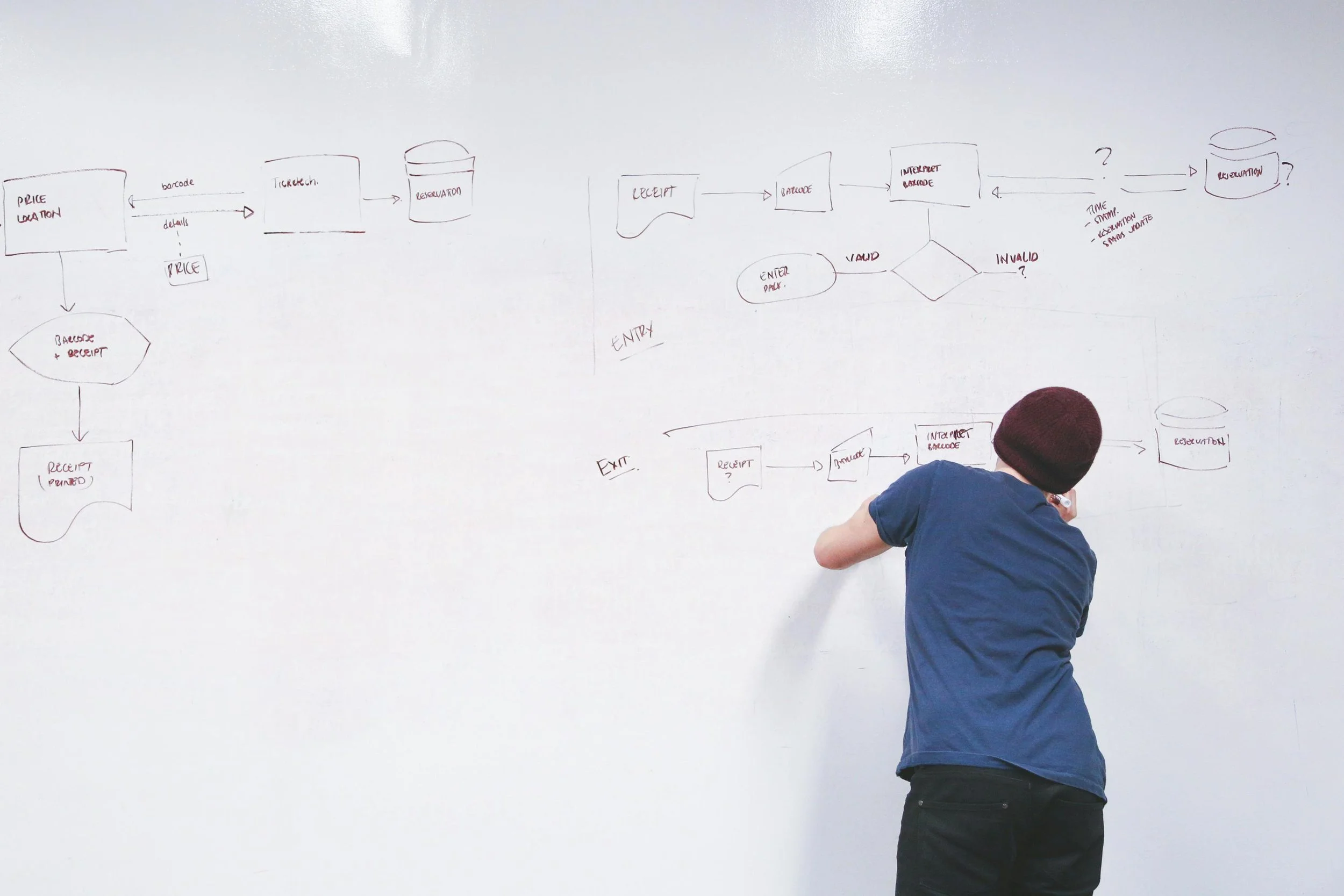

Deciding

Deciding doesn't mean "committing to something." It means structuring options, evidence, risks and priorities so that a close becomes possible and holds up in execution. In complex contexts, quality rarely fails because of a lack of expertise. It fails because of soft criteria, shifting standards and the inability to reach a clean close.

Typical Situations

Decisions drag because criteria stay implicit or keep getting renegotiated

Risks get either smoothed over or blown out of proportion, blocking a clean close

Discussions go in circles because options aren't set up to be compared

After the meeting, nobody is quite sure what was actually decided or what comes next

Stakeholder input overturns decisions after the fact because the framework wasn't solid to begin with

What Changes

Faster closes, because criteria, decision rights and stop rules are clear

More robust decisions, because bias and noise are reduced

Fewer loops, because options are made comparable and trade-offs are made explicit

Higher follow-through, because decisions are documented, retrievable and ready to act on

Better escalations, because conflicts and risks surface earlier rather than exploding later

Tools & Standards

Decision Briefing (decision template: problem, options, criteria, risks, recommendation)

Criteria Set + weighting (stable standards instead of gut feel)

Pre-Mortem ("How could this go wrong?")

Decision Log (decision protocol: decision, rationale, accountable person, next steps)

Checkpoints (decision milestones: when to review, when to re-decide)

Confidence Calibration ("How certain are we really?")
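A Criteria Set with weighting boils down to simple arithmetic: rate each option per criterion, multiply by the agreed weight and compare the totals. The following is a minimal sketch under stated assumptions. The criteria names, weights, 1-5 rating scale and option names are all hypothetical.

```python
def weighted_score(ratings, weights):
    """Weighted average of criterion ratings; weights need not sum to 1."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[c] * w for c, w in weights.items()) / total_weight


# Hypothetical criteria and weights, fixed before the options are discussed
weights = {"impact": 0.5, "cost": 0.3, "risk": 0.2}

# Hypothetical ratings on a 1-5 scale (higher is better on every criterion)
options = {
    "Option A": {"impact": 4, "cost": 2, "risk": 3},
    "Option B": {"impact": 3, "cost": 4, "risk": 4},
}

# Rank options from best to worst by their weighted score
ranking = sorted(
    options,
    key=lambda name: weighted_score(options[name], weights),
    reverse=True,
)
```

With these numbers, Option A scores 3.2 and Option B scores 3.5, so Option B ranks first even though Option A leads on the most heavily weighted criterion. Fixing the weights before rating the options is what keeps the standard stable.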

For you:


Good decisions rarely come from more discussion. They come from better structure. In complex organisations, decision quality typically breaks down where criteria stay implicit, accountability blurs and decisions are not documented in a way that holds up. The result is coordination loops, rework and decisions that go soft under pressure.

The central lever is standardisation where it matters. Recurring decision types get clear criteria, a brief protocol and a clean logic for who decides, who provides input and what counts as good enough. Intuition is not replaced, it is calibrated. Quick calls get backed by a few robust checks and stay traceable, even under time pressure.

A second lever is reducing bias and noise, that is, systematic distortion and random variance in judgements. When criteria are explicit and decisions are documented consistently, mood, stakeholder pressure or the state of the day become far less likely to drive outcomes than evidence and reasoning. Empirical research suggests that structured decision processes increase the coherence and speed of strategic decisions and improve consistency over time.

With the WEF projecting that around 39% of workers' core skills will change by 2030, a stable decision framework becomes infrastructure for quality under uncertainty. Teams can prioritise faster, weigh risks more cleanly and make decisions repeatably, instead of renegotiating from scratch every time.


Berthet, V. (2022). The Impact of Cognitive Biases on Professionals’ Decision-Making: A Review of Four Occupational Areas. Frontiers in Psychology, 12, 802439. https://doi.org/10.3389/fpsyg.2021.802439

Dean, J. W., & Sharfman, M. P. (1996). Does Decision Process Matter? A Study of Strategic Decision-Making Effectiveness. Academy of Management Journal, 39(2), 368–396.

Knight, A. T., Cowling, R. M., Rouget, M., Balmford, A., Lombard, A. T., & Campbell, B. M. (2008). Knowing but not doing: Selecting priority conservation areas and the research-implementation gap. Conservation Biology, 22(3), 610–617. https://doi.org/10.1111/j.1523-1739.2008.00914.x

Midtgård, K., & Selart, M. (2025). Cognitive Biases in Strategic Decision-Making. Administrative Sciences, 15(6), 227. https://doi.org/10.3390/admsci15060227

Milkman, K. L., Chugh, D., & Bazerman, M. H. (2009). How Can Decision Making Be Improved? Perspectives on Psychological Science, 4(4), 379–383. https://doi.org/10.1111/j.1745-6924.2009.01142.x

Morewedge, C. K., Yoon, H., Scopelliti, I., Symborski, C. W., Korris, J. H., & Kassam, K. S. (2015). Debiasing Decisions: Improved Decision Making With a Single Training Intervention. Policy Insights from the Behavioral and Brain Sciences, 2(1), 129–140. https://doi.org/10.1177/2372732215600886

Theodorakopoulos, L., Theodoropoulou, A., & Halkiopoulos, C. (2025). Cognitive Bias Mitigation in Executive Decision-Making: A Data-Driven Approach Integrating Big Data Analytics, AI, and Explainable Systems. Electronics, 14(19), 3930. https://doi.org/10.3390/electronics14193930

World Economic Forum. (2025). Future of Jobs Report 2025—Insight Report. World Economic Forum. https://reports.weforum.org/docs/WEF_Future_of_Jobs_Report_2025.pdf

Young, R. (2023, August 28). The Power Of Critical Thinking: Enhancing Decision-Making And Problem-Solving. Forbes. https://www.forbes.com/councils/forbescoachescouncil/2023/07/28/enhancing-decision-making-and-problem-solving/


Communicating

Communication is the infrastructure of collaboration. It determines whether information is understood, whether expectations are clear and whether decisions hold up in execution.

In complex working environments, quality rarely breaks down for lack of communication. It breaks down because responsibilities are unclear, assumptions stay implicit and standards for coordination and handovers are missing.

Typical Situations

Content gets discussed but understood differently, because terms, goals or priorities haven't been made concrete

Tasks and handovers stay vague, leading to follow-up questions, parallel work and rework

Conflicts surface late, because problems end up in side channels or get socially defused for too long

Meetings generate activity but no clear decisions, because expectations, input and completion criteria are missing

Leadership communication feels inconsistent, because messages vary by situation and trust erodes as a result

What Changes

Fewer misunderstandings, because goals, terms, responsibilities and completion criteria are made explicit

Greater accountability, because commitments, next steps and handovers are documented and verifiable

Fewer escalations, because tensions become visible earlier and clarification can happen in a structured way

Faster coordination, because alignment runs through standards rather than repeated back-and-forth

More psychological safety, because contributions, objections and mistakes get raised earlier without status games dominating

Tools & Standards

Expectation Setting (goal, context, decision vs. update, desired output)

Definition of Done (when something counts as finished) + Next Step + deadline

Handover Standard (owner, context, next steps, dependencies, checkpoint)

Meeting Standards (purpose, roles, input in advance, decision logic, close and protocol)

Conflict Clarification Format (role, concern, decision point, boundaries, concrete agreement)

Communication Templates (short memos and briefings covering problem, goal, options, recommendation and risks)

For you:


Effective communication is less about rhetoric and more about standardisation where it matters. When goals, roles and expectations are not explicit, the system fills the gaps with assumptions, interpretations, status games and side conversations. That is where the costs accumulate. Work gets done twice, decisions slow down, conflicts are addressed too late and accountability stays diffuse.

A robust communication framework reduces this variance by stabilising three things: shared terms and priorities, clear responsibilities and handovers, and reliable completion criteria. Research shows that this kind of structuring measurably improves collaboration and reduces friction losses, because less energy goes into clarification loops and more goes into execution.

In teams, the effect often shows up immediately: fewer follow-up questions, less rework, fewer escalations and noticeably greater accountability. At the same time, psychological safety increases, because objections and uncertainties can be raised earlier without the risk of losing face. People who share ideas, address mistakes early and take ownership need a framework where that is genuinely safe.

Communication is therefore not a soft skill. It is infrastructure, and with that a core driver of productivity, quality and culture that shows up directly in decision speed, coordination overhead and trust.


Costa, A. C., Roe, R. A., & Taillieu, T. (2001). Trust within teams: The relation with performance effectiveness. European Journal of Work and Organizational Psychology, 10(3), 225–244. https://doi.org/10.1080/13594320143000654

Detert, J. R., & Burris, E. R. (2007). Leadership Behavior and Employee Voice: Is the Door Really Open? Academy of Management Journal, 50(4), 869–884. https://doi.org/10.5465/amj.2007.26279183

Edmondson, A. (1999). Psychological Safety and Learning Behavior in Work Teams. Administrative Science Quarterly, 44(2), 350–383. https://doi.org/10.2307/2666999

Grossman, D. (2011, July 17). The Cost Of Poor Communications. Holmes Report. https://www.provokemedia.com/latest/article/the-cost-of-poor-communications

Katebi, A., Saheli, A. M., Aghaei, H., & Ahmadi, Q. (2024). Meta-Analysis of Communication and Organizational Performance: Moderating Effects of Research Subject, Country and Year of Publication. Journal of System Management (JSM), 10(1), 33–48. https://doi.org/10.30495/JSM.2023.1981650.1789

Lepine, J. A., & Piccolo, R. F. (2008). A Meta-Analysis of Teamwork Processes: Tests of a Multidimensional Model and Relationships with Team Effectiveness Criteria. Personnel Psychology, 61, 273–307.

Lilienfeld, S. O., Ammirati, R., & Landfield, K. (2009). Giving Debiasing Away: Can Psychological Research on Correcting Cognitive Errors Promote Human Welfare? Perspectives on Psychological Science, 4(4), 390–398. https://doi.org/10.1111/j.1745-6924.2009.01144.x

Mesmer-Magnus, J. R., & DeChurch, L. A. (2009). Information sharing and team performance: A meta-analysis. Journal of Applied Psychology, 94(2), 535–546. https://doi.org/10.1037/a0013773

Tourish, D., & Robson, P. (2006). Sensemaking and the Distortion of Critical Upward Communication in Organizations. Journal of Management Studies, 43(4), 711–730. https://doi.org/10.1111/j.1467-6486.2006.00608.x

Wiese, C. W., Burke, C. S., Tang, Y., Hernandez, C., & Howell, R. (2022). Team Learning Behaviors and Performance: A Meta-Analysis of Direct Effects and Moderators. Group & Organization Management, 47(3), 571–611. https://doi.org/10.1177/10596011211016928


Using Artificial Intelligence (AI)

AI speeds up work, but it doesn't replace judgement. In practice, quality breaks down where models sound plausible but create false certainty, and where people quietly delegate responsibility to tools. The lever isn't "more AI" but Human-in-the-Loop standards: clear boundaries for use, quality checks and decision logic that scales speed without increasing risk.

Typical Situations

AI delivers convincing answers, but hallucinations (fabricated content) or false sources go unnoticed

Teams adopt suggestions too quickly because the output looks like a solution and critical review gets skipped

Decisions become inconsistent because prompts, underlying data or evaluation standards vary from person to person

Confidential information ends up in tools because governance (rules, approvals, boundaries) is missing

AI creates speed but more rework, because expectations, definitions of done and responsibilities aren't clear

What Changes

Higher reliability, because AI outputs are systematically verified (source check, counter-check, plausibility)

More robust decisions, because bias transfer (adopting distortions) and automation bias (over-trusting systems) are mitigated

Less rework, because quality thresholds, responsibilities and handovers are explicit

Faster execution, because AI is used where it genuinely has leverage and the rest stays clearly manual

Better traceability, because decisions and assumptions are documented rather than disappearing into chat logs

Tools & Standards

Human-in-the-Loop rules (when AI, when human, who carries responsibility)

Prompt standards (goal, context, constraints, output format, review criteria)

Quality checks (source check, counterarguments, comparison of multiple approaches)

Risk and data protection rules (which data may go in, which must never go in)

Governance-Light (approvals, tool list, roles, update and review routines)

Decision Briefing + AI component (which parts AI delivers, which must be reviewed)

For you:


AI can increase throughput, but it also increases the risk of false precision. Answers look complete even when central assumptions are missing, sources are unclear or uncertainty has not been made visible. Research on reasoning models suggests that accuracy drops as problem complexity increases, precisely where decisions become most costly, such as in prioritisation, strategy, risk assessment or stakeholder communication.

The decisive difference comes from standards. When it is clear what AI is used for, what counts as sufficient quality and which review steps are mandatory, AI stops being a novelty and becomes infrastructure. Human-in-the-Loop does not mean slower. It means speed with control. AI handles drafting, structure, variants and preliminary analysis, while humans cover evaluation, risk, accountability and the final call. Teams that embed human judgement into AI-assisted processes tend to make more robust and consistent decisions.

A frequently underestimated effect is cognitive debt. When teams outsource too much of their review competence and judgement practice, speed increases in the short term but errors, dependency and rework grow over time. Research suggests this effect sets in gradually because the loss of competence only becomes visible once it has already happened.

This is precisely why clear quality routines and governance make sense even in small setups. A few rules applied consistently are often enough to raise both benefit and safety at the same time. As employers increasingly expect AI and data skills, reflective decision-making becomes a prerequisite for responsible and effective AI use.


Bucher, U., Holzweißig, K., & Schwarzer, M. (2024). Künstliche Intelligenz und wissenschaftliches Arbeiten: ChatGPT & Co.: der Turbo für ein erfolgreiches Studium. Verlag Franz Vahlen.

Carucci, R. (2024, February 6). In The Age Of AI, Critical Thinking Is More Needed Than Ever. Forbes. https://www.forbes.com/sites/roncarucci/2024/02/06/in-the-age-of-ai-critical-thinking-is-more-needed-than-ever/

Chen, Y., Kirshner, S. N., Ovchinnikov, A., Andiappan, M., & Jenkin, T. (2025). A Manager and an AI Walk into a Bar: Does ChatGPT Make Biased Decisions Like We Do? Manufacturing & Service Operations Management, 27(2), 354–368. https://doi.org/10.1287/msom.2023.0279

Cheung, V., Maier, M., & Lieder, F. (2025). Large language models show amplified cognitive biases in moral decision-making. Proceedings of the National Academy of Sciences, 122(25), e2412015122. https://doi.org/10.1073/pnas.2412015122

Goddard, K., Roudsari, A., & Wyatt, J. C. (2012). Automation bias: A systematic review of frequency, effect mediators, and mitigators. Journal of the American Medical Informatics Association, 19(1), 121–127. https://doi.org/10.1136/amiajnl-2011-000089

Kosmyna, N., Hauptmann, E., Yuan, Y. T., Situ, J., Liao, X.-H., Beresnitzky, A. V., Braunstein, I., & Maes, P. (2025). Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task (No. arXiv:2506.08872). arXiv. https://doi.org/10.48550/arXiv.2506.08872

Lee, H.-P. (Hank), Sarkar, A., Tankelevitch, L., Drosos, I., Rintel, S., Banks, R., & Wilson, N. (2025). The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers. Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, 1–22. https://doi.org/10.1145/3706598.3713778

Milkman, K. L., Chugh, D., & Bazerman, M. H. (2009). How Can Decision Making Be Improved? Perspectives on Psychological Science, 4(4), 379–383. https://doi.org/10.1111/j.1745-6924.2009.01142.x

Mosier, K. L., Skitka, L. J., Heers, S., & Burdick, M. (1998). Automation Bias: Decision Making and Performance in High-Tech Cockpits. The International Journal of Aviation Psychology, 8(1), 47–63. https://doi.org/10.1207/s15327108ijap0801_3

Risko, E. F., & Gilbert, S. J. (2016). Cognitive Offloading. Trends in Cognitive Sciences, 20(9), 676–688. https://doi.org/10.1016/j.tics.2016.07.002

Shojaee, P., Mirzadeh, I., Alizadeh, K., Horton, M., Bengio, S., & Farajtabar, M. (2025). The Illusion of Thinking: Understanding the Strengths and Limitations of Reasoning Models via the Lens of Problem Complexity (No. arXiv:2506.06941). arXiv. https://doi.org/10.48550/arXiv.2506.06941

Theodorakopoulos, L., Theodoropoulou, A., & Halkiopoulos, C. (2025). Cognitive Bias Mitigation in Executive Decision-Making: A Data-Driven Approach Integrating Big Data Analytics, AI, and Explainable Systems. Electronics, 14(19), 3930. https://doi.org/10.3390/electronics14193930


Evaluating Information

In a world of permanent information availability, what matters isn't quantity but quality of judgement. What holds up, what sounds plausible but is wrong, and what is simply unclear? In organisations, poor decisions rarely come from too little research. They come from weak or untested sources, missing evidence standards and false precision. The lever is a reliable evidence base and clear rules for handling uncertainty and communication.

Typical Situations

Claims sound convincing, but sources are weak, selective or not traceable

Numbers get presented without context (base rate, comparison group, effect size, uncertainty)

Studies, reports or expert opinions get mixed together without distinguishing quality (peer review, methodology, conflicts of interest)

Teams debate opinions because criteria for what counts as sufficient evidence are missing

Communication creates false certainty because uncertainty isn't named clearly, leading to rework or loss of trust later

What Changes

Greater decision confidence, because evidence is assessed and weighted against fixed criteria

Less exposure to misinformation, because typical manipulation patterns and faulty reasoning get spotted early

Better prioritisation, because uncertainty is made explicit and risks are assessed more realistically

Faster alignment, because teams share a common language for evidence, quality and what remains unclear

Stronger external impact, because statements are more precise, more robust and communicated consistently

Tools & Standards

Source Check (authority, data basis, methodology, conflicts of interest, currency)

Evidence Rubric (what counts as strong, moderate or weak, and why)

Uncertainty Standard (what is certain, what is unclear, what gets checked next)

Argument Check (faulty reasoning, causation vs. correlation, base rates, cherry picking)

Claim-Evidence-Confidence Standard (claim, supporting evidence, confidence scale)

Stakeholder Communication Template (core message, rationale, boundaries, next steps)
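The Claim-Evidence-Confidence Standard can be pictured as a small record that keeps a claim, its supporting evidence and the stated confidence together. The sketch below is one possible shape, not a fixed format. The class, field names and example content are hypothetical, and the three evidence levels borrow the strong, moderate and weak labels from the Evidence Rubric.

```python
from dataclasses import dataclass, field

# Ordered from weakest to strongest
EVIDENCE_LEVELS = ("weak", "moderate", "strong")


@dataclass
class Claim:
    statement: str
    confidence: float  # stated probability that the claim holds, 0..1
    evidence: list = field(default_factory=list)        # (source, level) pairs
    open_questions: list = field(default_factory=list)  # what gets checked next

    def __post_init__(self):
        # Reject records that fall outside the agreed scales
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")
        for _, level in self.evidence:
            if level not in EVIDENCE_LEVELS:
                raise ValueError(f"unknown evidence level: {level}")

    def strongest_evidence(self):
        """Return the best evidence level on record, or None if there is none."""
        levels = [level for _, level in self.evidence]
        return max(levels, key=EVIDENCE_LEVELS.index) if levels else None


# Hypothetical example entry
claim = Claim(
    statement="Structured handovers reduce rework in our team",
    confidence=0.7,
    evidence=[
        ("internal retrospective notes", "weak"),
        ("published meta-analysis", "moderate"),
    ],
    open_questions=["Does the effect hold for remote teams?"],
)
```

Keeping the open questions in the same record as the claim makes it harder for uncertainty to drop out when the statement is passed on.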

For you:


In a world of permanent information availability, what determines outcomes is not volume but the ability to assess quality. Information literacy is not a research technique. It is decision infrastructure. It separates robust evidence from plausible rhetoric and makes uncertainty manageable. In data-rich environments, mistakes arise because statements look too clean: numbers without a baseline, studies without methodological context, expert quotes with undisclosed conflicts of interest. This leads to misprioritisation, unnecessary loops and reputational risk.

A central mechanism is epistemic knowledge, an understanding of how knowledge is produced. Research shows that those who command criteria for evidence and uncertainty identify misleading claims more reliably and communicate more precisely. In teams, this has a double effect. It improves decision quality and reduces conflict, because it is no longer opinion against opinion but criteria against criteria.

The practical lever is a small set of consistently applied standards: a brief source and argument check, a shared format for uncertainty and a clean translation into stakeholder communication. Naming uncertainties transparently rather than smoothing them over builds trust and protects reputation, even when not everything is certain. This reduces research overhead while robustness increases. Decisions become more traceable, errors get spotted earlier and communication holds up under scrutiny.

For businesses, this means that trained information literacy improves the precision of professional decisions and strengthens the cognitive resilience of teams in a working environment where false precision and misinformation have real costs.


Brainard, J., Hunter, P. R., & Hall, I. R. (2020). An agent-based model about the effects of fake news on a norovirus outbreak. Revue d’Épidémiologie et de Santé Publique, 68(2), 99–107. https://doi.org/10.1016/j.respe.2019.12.001

Carucci, R. (2024, February 6). In The Age Of AI, Critical Thinking Is More Needed Than Ever. Forbes. https://www.forbes.com/sites/roncarucci/2024/02/06/in-the-age-of-ai-critical-thinking-is-more-needed-than-ever/

Cook, J., Ellerton, P., & Kinkead, D. (2018). Deconstructing climate misinformation to identify reasoning errors. Environmental Research Letters, 13(2), 024018. https://doi.org/10.1088/1748-9326/aaa49f

Eisenberg, M. B. (2008). Information Literacy: Essential Skills for the Information Age. DESIDOC Journal of Library & Information Technology, 28(2), 39–47. https://doi.org/10.14429/djlit.28.2.166

Feierabend, S., Rathgeb, T., Kheredmand, H., & Glöckler, S. (2022). JIM-Studie 2022: Jugend, Information, Medien. Basisuntersuchungen zum Medienumgang 12- bis 19-Jähriger. Medienpädagogischer Forschungsverbund Südwest (MPFS). https://www.mpfs.de/fileadmin/files/Studien/JIM/2022/JIM_2022_Web_final.pdf

Fuchs, C. (2022). Verschwörungstheorien in der Pandemie: Wie über COVID-19 im Internet kommuniziert wird (1st ed.). utb GmbH. https://doi.org/10.36198/9783838557960

Hasebrink, U., Hölig, S., & Wunderlich, L. (2021). #UseTheNews: Studie zur Nachrichtenkompetenz Jugendlicher und junger Erwachsener in der digitalen Medienwelt. Arbeitspapiere des Hans-Bredow-Instituts. https://doi.org/10.21241/SSOAR.72822

Lewandowsky, S., Ecker, U. K. H., Seifert, C. M., Schwarz, N., & Cook, J. (2012). Misinformation and Its Correction: Continued Influence and Successful Debiasing. Psychological Science in the Public Interest, 13(3), 106–131. https://doi.org/10.1177/1529100612451018

Meßmer, A.-K., Sängerlaub, A., & Schulz, L. (2021). „Quelle: Internet“? Digitale Nachrichten- und Informationskompetenzen der deutschen Bevölkerung im Test (Stiftung Neue Verantwortung e. V., Ed.). Hochschule der Medien. https://doi.org/66090

Organisation for Economic Co-operation and Development. (2019). OECD Future of Education and Skills 2030—Conceptual learning framework—TRANSFORMATIVE COMPETENCIES FOR 2030. OECD. https://www.oecd.org/content/dam/oecd/en/about/projects/edu/education-2040/concept-notes/Transformative_Competencies_for_2030_concept_note.pdf

Sharon, A. J., & Baram‐Tsabari, A. (2020). Can science literacy help individuals identify misinformation in everyday life? Science Education, 104(5), 873–894. https://doi.org/10.1002/sce.21581

Sinatra, G. M., Kienhues, D., & Hofer, B. K. (2014). Addressing Challenges to Public Understanding of Science: Epistemic Cognition, Motivated Reasoning, and Conceptual Change. Educational Psychologist, 49(2), 123–138. https://doi.org/10.1080/00461520.2014.916216


Scientific Foundation

The studies below support not only the relevance of these domains but also the effectiveness of the approach itself. Structured decision frameworks, debiasing interventions and clear standards work reliably, including in professional settings.

Thinking and Judgement

Research shows that targeted reflection and training in metacognitive strategies significantly improve decision quality, reduce rumination and strengthen confidence in one’s own judgement. Even brief bias-awareness interventions lead to measurable improvements in decision behaviour (Kakinohana & Pilati, 2023; Larrick & Feiler, 2015; Morewedge et al., 2015).

Decision-Making in Organisations

Organisations with clearly defined decision frameworks and structured evaluation processes decide faster, more consistently and with higher quality. Bias-sensitive decision architectures measurably reduce rework, increase strategic coherence and strengthen trust in leadership and decision processes (Dean & Sharfman, 1996; Harvard Business Review, 2016, 2020a, 2020b; Larrick & Feiler, 2015; Milkman et al., 2009; Theodorakopoulos et al., 2025).

Team Communication and Collaboration

Structured reflection routines, clear decision frameworks and precise communication improve coordination, reduce rework and lead to higher-quality outcomes. Psychological safety and shared bias awareness measurably increase communication quality and decision speed (Curşeu & Schruijer, 2012; Jones & Roelofsma, 2000; Midtgård & Selart, 2025; Rutka et al., 2023).

Training and Interventions

Even short, evidence-based training formats lead to clear improvements in judgement and decision processes. Approaches that build bias awareness and metacognitive insight support durable changes in thinking and behaviour, particularly for specialists and leaders (Berthet, 2022; Lilienfeld et al., 2009; Midtgård & Selart, 2025; Morewedge et al., 2015; Siebert et al., 2021).

AI and Information Evaluation

As AI use increases, so does the risk of bias transfer and uncritical trust in model outputs. Studies show that AI systems can not only reproduce cognitive biases but amplify them. Structured human-in-the-loop checks and clear quality standards measurably reduce these risks (Cheung et al., 2025; Goddard et al., 2012; Lee et al., 2025).

Berthet, V. (2022). The Impact of Cognitive Biases on Professionals’ Decision-Making: A Review of Four Occupational Areas. Frontiers in Psychology, 12, 802439. https://doi.org/10.3389/fpsyg.2021.802439

Cheung, V., Maier, M., & Lieder, F. (2025). Large language models show amplified cognitive biases in moral decision-making. Proceedings of the National Academy of Sciences, 122(25), e2412015122. https://doi.org/10.1073/pnas.2412015122

Curşeu, P. L., & Schruijer, S. G. L. (2012). Decision Styles and Rationality: An Analysis of the Predictive Validity of the General Decision-Making Style Inventory. Educational and Psychological Measurement, 72(6), 1053–1062. https://doi.org/10.1177/0013164412448066

Dean, J. W., & Sharfman, M. P. (1996). Does Decision Process Matter? A Study of Strategic Decision-Making Effectiveness. Academy of Management Journal, 39(2), 368–396.

Goddard, K., Roudsari, A., & Wyatt, J. C. (2012). Automation bias: A systematic review of frequency, effect mediators, and mitigators. Journal of the American Medical Informatics Association, 19(1), 121–127. https://doi.org/10.1136/amiajnl-2011-000089

Jones, P. E., & Roelofsma, P. H. M. P. (2000). The potential for social contextual and group biases in team decision-making: Biases, conditions and psychological mechanisms. Ergonomics, 43(8), 1129–1152. https://doi.org/10.1080/00140130050084914

Kakinohana, R. K., & Pilati, R. (2023). Differences in decisions affected by cognitive biases: Examining human values, need for cognition, and numeracy. Psicologia: Reflexão e Crítica, 36(1), 26. https://doi.org/10.1186/s41155-023-00265-z

Larrick, R. P., & Feiler, D. C. (2015). Expertise in Decision Making. In G. Keren & G. Wu (Eds.), The Wiley Blackwell Handbook of Judgment and Decision Making (1st ed., pp. 696–721). Wiley. https://doi.org/10.1002/9781118468333.ch24

Lee, H.-P. (Hank), Sarkar, A., Tankelevitch, L., Drosos, I., Rintel, S., Banks, R., & Wilson, N. (2025). The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers. Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, 1–22. https://doi.org/10.1145/3706598.3713778

Lilienfeld, S. O., Ammirati, R., & Landfield, K. (2009). Giving Debiasing Away: Can Psychological Research on Correcting Cognitive Errors Promote Human Welfare? Perspectives on Psychological Science, 4(4), 390–398. https://doi.org/10.1111/j.1745-6924.2009.01144.x

Midtgård, K., & Selart, M. (2025). Cognitive Biases in Strategic Decision-Making. Administrative Sciences, 15(6), 227. https://doi.org/10.3390/admsci15060227

Milkman, K. L., Chugh, D., & Bazerman, M. H. (2009). How Can Decision Making Be Improved? Perspectives on Psychological Science, 4(4), 379–383. https://doi.org/10.1111/j.1745-6924.2009.01142.x

Morewedge, C. K., Yoon, H., Scopelliti, I., Symborski, C. W., Korris, J. H., & Kassam, K. S. (2015). Debiasing Decisions: Improved Decision Making With a Single Training Intervention. Policy Insights from the Behavioral and Brain Sciences, 2(1), 129–140. https://doi.org/10.1177/2372732215600886

Siebert, J. U., Kunz, R. E., & Rolf, P. (2021). Effects of decision training on individuals’ decision-making proactivity. European Journal of Operational Research, 294(1), 264–282. https://doi.org/10.1016/j.ejor.2021.01.010

Theodorakopoulos, L., Theodoropoulou, A., & Halkiopoulos, C. (2025). Cognitive Bias Mitigation in Executive Decision-Making: A Data-Driven Approach Integrating Big Data Analytics, AI, and Explainable Systems. Electronics, 14(19), 3930. https://doi.org/10.3390/electronics14193930

The right starting point

Ready to get started? Choose your entry point or message me directly.

Ready to turn clarity into a system?